We’ve been taught to worry about stupid people in power. That’s the wrong fear. Broken systems don’t survive because nobody is smart enough to see through them. They survive because the people best-positioned to expose them are usually the people most rewarded for keeping them intact. The threat isn’t a shortage of intelligence, it’s intelligence that’s been bought—quietly and gradually, through incentives and status and the slow comfort of institutional belonging. This includes, it should be said, the intelligence of whoever is making this argument.

Intelligence Is Not Independence

There’s a flattering story we tell about smart people: that they’re more rational, less biased, better at following evidence wherever it leads. It’s mostly wrong. What high intelligence actually gives you is a better toolkit for rationalization. A dim man will defend a broken institution with bumper-sticker loyalty. A brilliant one will defend it with regressions, citations, and the borrowed vocabulary of whatever intellectual tradition his institution has colonized.

Jonathan Haidt’s research makes the method plain: moral and political reasoning is almost always post-hoc. We land on conclusions first—through self-interest, through identity, through who signs our checks—and then we construct arguments to justify them. The smarter you are, the more convincing those arguments become. But the conclusion was often baked in before the reasoning started.

This obviously doesn’t mean that smart people are always wrong. It means the correlation between intelligence and institutional loyalty is stronger than most smart people would like to admit.

Credentialism Defends Itself

Look at who fights the hardest to defend credential systems: the people inside them. This isn’t a complete indictment. Credentials sometimes track competence. Board-certified surgeons are, on average, better at surgery than uncertified ones. Joseph Stiglitz’s Nobel-winning work on information asymmetries offers the strongest intellectual case for credentialing. In markets where buyers can’t evaluate quality directly, certification can be a genuine signal that improves outcomes. That argument deserves respect.

But Stiglitz’s own framework, followed honestly, predicts the capture problem too. When the credentialing body is controlled by the credentialed, when the licensed set the licensing terms, the system gets corrupted. It stops measuring competence and starts measuring compliance with whoever controls the gate. The Institute for Justice has documented that licensing now covers roughly a quarter of American workers, up from about five percent in the 1950s. That expansion hasn’t tracked any identifiable increase in consumer harm from unlicensed practitioners. It has tracked, with remarkable consistency, the lobbying power of incumbent professionals. Stiglitz gave us the theory of why credentialing can work. The data shows what it usually becomes.

Bryan Caplan’s broader argument follows the same logic to a clearer conclusion: formal education largely signals conformity rather than competence. If he’s right, every credentialed intellectual has a direct financial stake in his being wrong—and will argue accordingly, sincerely, because the mind is genuinely good at believing what it needs to believe.

Markets Capture Too

Here is where the argument needs to be honest with itself, or it’s just performing independence rather than practicing it. The credit rating agencies—Moody’s, Standard & Poor’s, Fitch—are private firms operating in competitive markets. In the years before 2008, they rated mortgage-backed securities stuffed with subprime loans as AAA—the highest possible grade, implying near-zero default risk. They weren’t government bureaucrats. They weren’t tenured professors. They were profit-seeking private analysts who had built a business model around being paid by the issuers whose products they rated. The FCIC’s final report concluded that their failures were essential to the collapse.

The mechanism was identical to what this piece describes in the public sector: an expert class whose institutional survival depended on not seeing what was directly in front of them. Private accreditation bodies, industry self-regulatory organizations, and corporate-funded research all exhibit the same pattern. The form of the institution changes, but the incentive dynamic doesn’t.

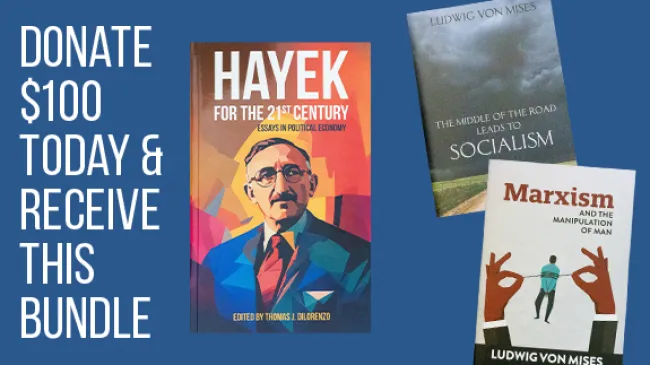

Hayek’s “The Use of Knowledge in Society” remains the strongest case for decentralized systems: they aggregate dispersed information that no central authority can possess. That’s true and important. But his argument is about the superiority of market processes over central planning—not a guarantee that market institutions are immune to the failure mode this essay describes. A market can correctly allocate resources and still produce a rating agency that calls toxic debt safe, because the rating agency’s revenue depends on saying so. Conflating these failure modes weakens both arguments.

Failure Becomes Funding

In a real market, failure has consequences. In the expert class, failure works differently. When a program collapses, the lesson is almost never that the program should end. The lesson is that it needs more resources, better leadership, a new framework, a commission. Failure doesn’t shrink the expert’s jurisdiction; it expands it.

The War on Poverty requires precision here. Census Bureau historical data shows that poverty rates did fall in the late 1960s—defenders of the program are right about that. What’s harder to defend is the subsequent arc: sustained expenditure with diminishing and contested returns, an administrative apparatus that grew regardless of outcomes, and a near-total institutional inability to conclude that less might produce more. The question isn’t whether any anti-poverty program ever helped anyone. The question is whether the institutions running them were structurally capable of recommending their own reduction. Sixty years of evidence suggests they weren’t.

Foreign policy follows the same pattern. Every botched nation-building exercise produces literature rather than accountability—papers, panels, and post-mortems—authored by the same people who designed said failure. The think tank doesn’t fold when its predictions are wrong. Instead, it holds a conference.

Julien Benda saw the shape of this a century ago in La Trahison des Clercs: a clerisy that had abandoned disinterested inquiry to serve class interest and institutional power. What Benda called treason is now an ordinary career track. The betrayal doesn’t happen in a single dramatic moment. It happens grant by grant, promotion by promotion, until it is invisible to the person committing it.

Complexity as Camouflage

When institutional defenders start losing the argument on its merits, they make it more complicated. The school producing illiterate graduates doesn’t have a failure. It has “a multidimensional interaction of socioeconomic variables, historical inequalities, and implementation gaps.” The captured regulatory agency doesn’t have a corruption problem. It faces “a challenging balance between stakeholder engagement and enforcement mandate.” Some of that language reflects genuine complexity. Educational outcomes really are shaped by socioeconomic factors, and pretending otherwise is its own motivated reasoning.

But the tell is the asymmetry: complexity gets invoked reliably when institutions face criticism and disappears when they’re claiming credit. In Interventionism: An Economic Analysis, Mises explained that each intervention spawns distortions that seem to demand further interventions, producing an expert class whose authority grows in proportion to the problems that authority helped create. The complexity isn’t incidental; it’s load-bearing.

The Least-Owned Mind—And Its Limits

The person most likely to state the obvious about a broken institution is usually the one with the least to lose by saying it. Outsiders see what insiders have trained themselves not to see. But the argument has to turn on itself here, otherwise it proves nothing.

The tradition of thought this piece draws from—skeptical of concentrated authority, of expert consensus, of institutional permanence—has its own outlets to sustain and its own audience, whose assumptions it would be professionally uncomfortable to challenge. The claim that establishment experts systematically rationalize in their institutional interests is either a universal observation about human incentives or it’s special pleading. If it is universal, it applies here too.

What would falsify this thesis? If credentialed insiders regularly and voluntarily reduced their own institutional authority in response to failure—dissolved programs, recommended deregulation of their own fields, published work that undermines their own status—that would be a serious problem for the argument. Such cases exist. They’re rare enough to be remarkable, which is itself data. But a theory that can’t account for the exceptions wouldn’t be a theory; it’d be a narrative.

Intelligence held by someone genuinely free to use it is formidable. Intelligence held by someone whose income and identity depend on a set of institutions remaining respectable is something else: the most sophisticated defense attorney a broken system has ever had. The danger isn’t that smart people can’t see what is wrong; it’s that they are smart enough to know exactly how to explain it away—and occasionally honest enough to catch themselves doing it.