Autonomous AI agents are becoming active economic participants on both sides of market transactions. Enterprise platforms now embed what vendors call “touchless operations,” with agents executing procurement decisions without human review. Blockchain networks let AI agents hold wallets, settle payments, and rebalance portfolios autonomously. Microsoft Research has already documented two-sided “agentic markets” where both buyers and sellers are AI proxies. The standard commentary praises the speed and consistency of it all. That praise is correct, and it misses something catastrophic: when both parties to a transaction are algorithms optimizing against pre-specified utility functions, the market ceases to do the one thing that justifies its existence; it ceases to discover genuine economic value.

Prices Are Discoveries, Not Coordinates

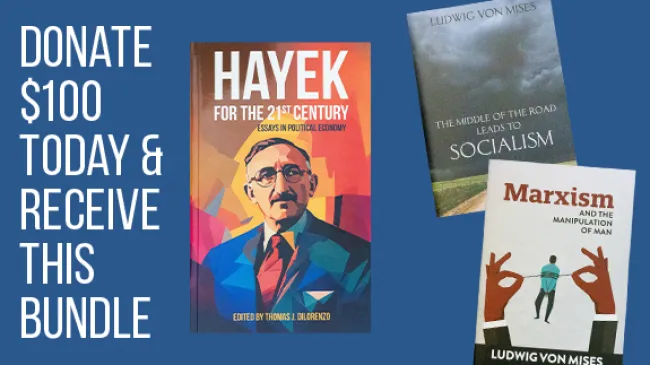

A price is not merely a number. In Friedrich Hayek’s formulation, it is a compressed signal encoding relative scarcity across an entire decentralized economy, synthesizing millions of individual valuations, constraints, and opportunity costs into information legible to strangers. His 1945 paper “The Use of Knowledge in Society” argued that the economic problem is fundamentally a problem of knowledge dispersed across millions of minds (tacit, context-specific, irreducibly personal) and that only the price system can transmit it without anyone having to know it all.

The crucial point is that prices do not merely transmit pre-existing information; they generate new information. The buyer who pays $12 rather than walk to a competitor reveals something about her preferences and opportunity costs that no algorithm could have extracted in advance. As the Cobden Centre’s 80th-anniversary analysis of Hayek’s paper notes, much of this knowledge is tacit, non-quantifiable, and discovered only in the act of exchange itself. Prices are epistemic events. Remove the human actors who generate them, and you do not have a faster market; you have a fundamentally different and diminished institution.

The Complete-Information Trap

When two AI agents negotiate, the buyer-agent has a utility function encoding budget, quality, and delivery parameters; the seller-agent has one encoding margins and capacity. They converge on a price satisfying both constraint sets. A number emerges—but nothing is discovered that was not already implicit in the objective functions both parties were assigned. The negotiation solves a coordination problem within a known parameter space.
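The point can be made concrete with a toy negotiation. What follows is a minimal sketch, not a model of any real procurement system: the class names, the concession rule, and every number are hypothetical. Each agent is handed a limit and an opening offer by its principal and concedes a fixed fraction of the distance to its own limit each round.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BuyerAgent:
    max_price: float    # hypothetical: ceiling set by the principal's budget
    opening_bid: float  # assumed to be at or below max_price


@dataclass(frozen=True)
class SellerAgent:
    min_price: float    # hypothetical: floor set by cost plus required margin
    opening_ask: float  # assumed to be at or above min_price


def negotiate(buyer, seller, rate=0.25, tolerance=0.01, max_rounds=10_000):
    """Alternating concessions; returns the agreed price, or None if no deal is possible."""
    if buyer.max_price < seller.min_price:
        return None  # the two constraint sets do not overlap
    bid, ask = buyer.opening_bid, seller.opening_ask
    for _ in range(max_rounds):
        if ask - bid <= tolerance:
            break  # offers have met or crossed
        # Each side moves a fixed fraction of the way toward its own limit.
        bid += rate * (buyer.max_price - bid)
        ask -= rate * (ask - seller.min_price)
    midpoint = (bid + ask) / 2
    # Clamp so the price always lies inside the pre-assigned zone of agreement.
    return min(max(midpoint, seller.min_price), buyer.max_price)


buyer = BuyerAgent(max_price=120.0, opening_bid=80.0)
seller = SellerAgent(min_price=90.0, opening_ask=140.0)
price = negotiate(buyer, seller)
print(price)
```

In this sketch `negotiate` is a pure function of parameters fixed before the exchange began. Running it again returns the same price, and that price always falls between the seller's floor and the buyer's ceiling. The "negotiation" could be replaced by a lookup.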

Game-theoretically, this is the difference between complete-information games—where equilibria are computable in advance—and games of genuine uncertainty, where payoffs are partly constituted by the act of play. Markets are valuable precisely because they are the latter. The entrepreneur who launches a new product does not know what it is worth; neither does the consumer. The price that emerges is the discovery of a value that neither possessed before. Agent-to-agent markets—constrained by pre-specified utility functions—cannot do this. Israel Kirzner called the capacity to perceive profit opportunities that do not yet exist as recognized objects “entrepreneurial alertness.” That alertness, he argued, is the engine of economic growth. It cannot be encoded in an objective function. Autonomous agents are structurally incapable of it.

Goodhart’s Law at Market Scale

A second pathology emerges when agents dominate markets: the incentive to manipulate your counterpart’s objective function rather than compete on underlying value. If you know a procurement agent ranks vendors by a composite score of price, quality rating, and delivery speed, the optimal strategy is to game that score rather than improve actual performance. This is Goodhart’s Law operating at market scale. Human buyers eventually notice and revise their frameworks. AI agents running on fixed or slowly updated objective functions create stable exploit surfaces. A 2025 NBER working paper showed that autonomous reinforcement-learning trading algorithms can learn to coordinate and sustain supra-competitive profits without any explicit agreement or communication. This is emergent collusion that current antitrust frameworks, built around detecting explicit communication, are entirely unable to address.
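A small illustration of the exploit, under loudly stated assumptions: the weights, normalization constants, and vendor figures below are invented for the example and describe no real procurement agent. The agent sees only the reported quality rating, while the `true_quality` field stands in for what the buyer would actually experience.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vendor:
    name: str
    price: float           # lower is better
    quality_rating: float  # reported rating on a 0-5 scale: the proxy the agent sees
    delivery_days: float   # lower is better
    true_quality: float    # hidden from the agent (hypothetical ground truth)


# Hypothetical weights for the composite score.
WEIGHTS = {"price": 0.4, "quality": 0.4, "delivery": 0.2}


def composite_score(v, max_price=100.0, max_days=10.0):
    """Weighted sum of normalized terms; note that true_quality never enters."""
    price_term = 1 - v.price / max_price
    quality_term = v.quality_rating / 5
    delivery_term = 1 - v.delivery_days / max_days
    return (WEIGHTS["price"] * price_term
            + WEIGHTS["quality"] * quality_term
            + WEIGHTS["delivery"] * delivery_term)


def pick_vendor(vendors):
    """The procurement agent simply maximizes the composite score."""
    return max(vendors, key=composite_score)


honest = Vendor("honest", price=60.0, quality_rating=4.0, delivery_days=4.0, true_quality=4.0)
# Same price and delivery, but the rating has been inflated while real quality is worse.
gamer = Vendor("gamer", price=60.0, quality_rating=4.9, delivery_days=4.0, true_quality=2.5)

winner = pick_vendor([honest, gamer])
print(winner.name, winner.true_quality)
```

With these made-up numbers the agent selects the vendor with the inflated rating even though its hidden quality is lower. As long as the scoring rule stays fixed, the same move keeps working.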

The evolutionary dynamics are grim. Metric-gaming strategies outperform genuine-value strategies because they minimize actual performance costs while satisfying the score. As gaming proliferates, the metrics decay as proxies for value—but the AI evaluators keep using them. The market stabilizes at an equilibrium in which all agents game all metrics and the price signals contain no useful economic information.
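The dynamic described above can be sketched with a textbook discrete replicator update. This is an illustrative toy, not an empirical model: it assumes both strategies earn the same revenue because the evaluator cannot tell them apart, and that gaming the metric costs less than delivering real performance. All parameter values are hypothetical.

```python
def replicator_step(gaming_share, payoff_gaming, payoff_genuine):
    """One generation: a strategy's share grows in proportion to its relative payoff."""
    mean_payoff = gaming_share * payoff_gaming + (1 - gaming_share) * payoff_genuine
    return gaming_share * payoff_gaming / mean_payoff


def simulate(initial_share=0.01, revenue=10.0, cost_gaming=2.0,
             cost_genuine=6.0, generations=50):
    """Track the share of metric-gaming vendors over time (all numbers hypothetical)."""
    payoff_gaming = revenue - cost_gaming    # same score, lower cost
    payoff_genuine = revenue - cost_genuine  # same score, higher cost
    shares = [initial_share]
    for _ in range(generations):
        shares.append(replicator_step(shares[-1], payoff_gaming, payoff_genuine))
    return shares


shares = simulate()
for generation in (0, 5, 10, 20, 50):
    print(generation, round(shares[generation], 4))
```

Under these assumptions the gaming share never falls and ends up close to one, starting from a population that is 99 percent genuine. The result depends entirely on the evaluator never updating its metric, which is the condition the argument attributes to fixed objective functions.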

The Evidence Already Here

This is not speculation. Digital advertising is the first major market in history where both buyers and sellers have been AI agents at scale for over a decade, and it functions as a live experiment in what happens when human preference revelation exits the picture. Spider Labs’ 2025 fraud report estimated losses exceeding $41.4 billion globally—up from $37.7 billion in 2024—with forecasts projecting $172 billion in losses by 2028. Between 20 and 30 percent of all digital ad spend is currently consumed by fraud—bots and click farms satisfying algorithmic targeting criteria in place of real human attention. Imperva’s 2025 Bad Bot Report found malicious bots now account for 37 percent of all internet traffic. Buying algorithms cannot distinguish genuine attention from bot signals that satisfy their criteria. Selling algorithms produce whatever signals buyers will pay for. The equilibrium is stable and catastrophic: billions circulate through a market not allocating advertising to human minds at all.

Efficiency Is the Wrong Defense

Mainstream economics has long defended markets on efficiency grounds. That defense is perennially vulnerable to the objection that powerful optimization algorithms can solve known allocation problems better than markets do—an objection that becomes harder to dismiss as algorithms grow more capable. But the efficiency framing misidentifies what markets are for. The Hayekian insight is not that markets efficiently use pre-existing information. It is that markets generate information that cannot exist before the market process itself. An agent-to-agent economy is, in this sense, closer to central planning than to a market economy—even if formally decentralized. Agents’ objective functions are set by human principals, just as planners set production targets. The negotiation executes those targets against each other. The appearance of exchange is preserved. The substance of discovery is not.

What Must Be Done

Human-in-the-loop approval for consequential exchanges should be treated as epistemic infrastructure, not bureaucratic drag. A 2025 Gartner survey found that 74 percent of IT leaders already identify fully autonomous agents as new attack vectors, a form of institutional resistance that should be deepened, not eroded. Antitrust regulation must evolve beyond detecting explicit communication: the NBER collusion research shows that emergent coordination can produce anti-competitive outcomes indistinguishable from cartel behavior while falling entirely outside current law. And economists must stop treating efficiency as the terminal value in market design. Efficiency is an instrument. Discovery is the purpose. The digital advertising catastrophe, a trillion-dollar market where by the estimates above 20 to 30 percent of spend reaches no human mind, is not an efficiency story; it is a preview of what every automated market becomes when human beings stop being the agents who make it work.

The Hayekian case for markets was never a case for algorithms. It was a case for human beings—for the irreplaceable epistemic contribution of persons acting on local, tacit, personally-discovered knowledge that no objective function can encode. As autonomous agents proliferate, the question is not whether they are efficient. It is whether they preserve, or quietly extinguish, the one property that made markets worth defending: the capacity to tell us things we did not know before.